The estimate is in the design

On stacking, discrete outcomes, matched inference, and data-driven controls

Hi there! Apologies for the disappearance! My thesis is due in a month so I was/am busy with that. There are 10 papers to catch up on, so we will start with four of them today and hopefully I can write another post next week.

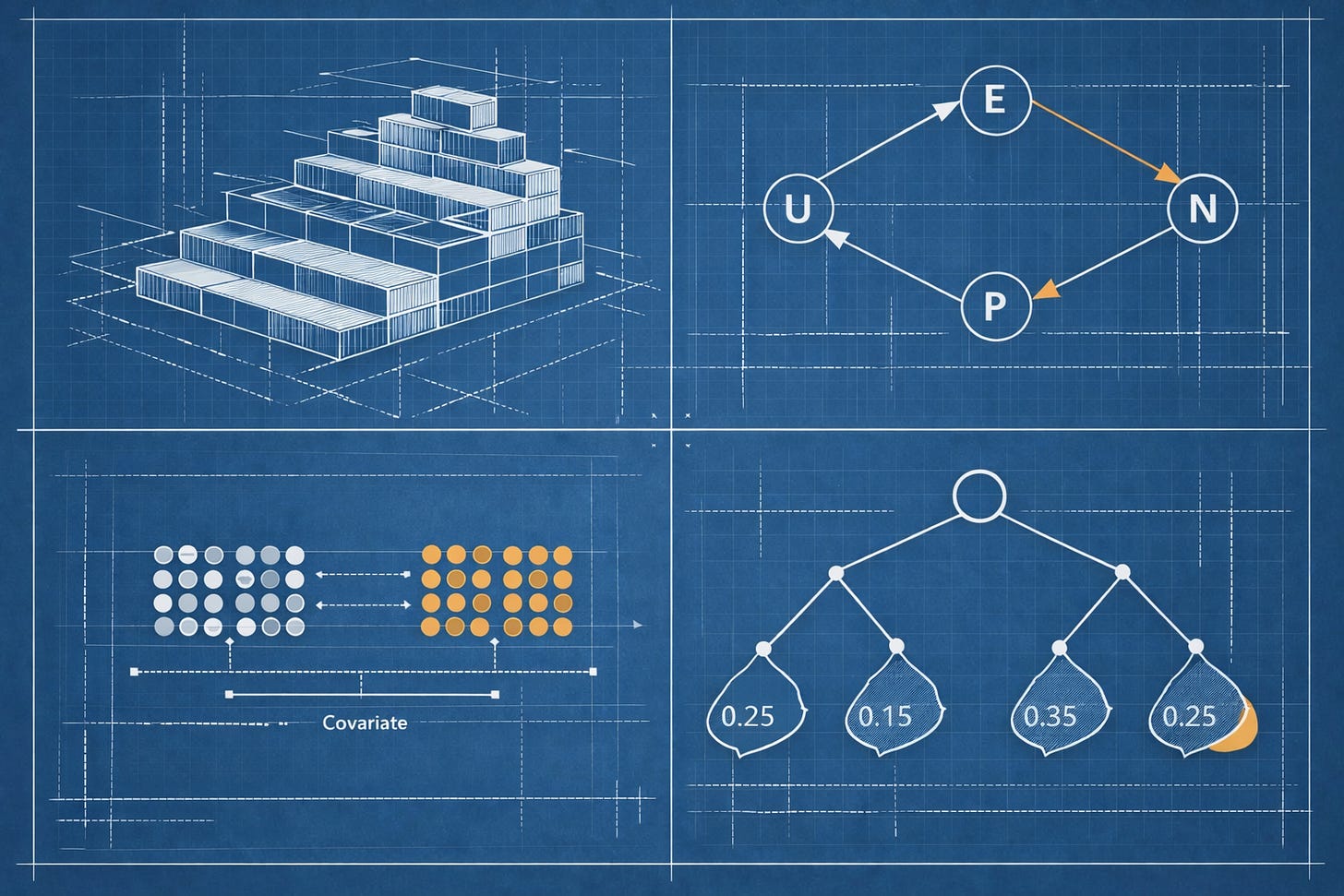

I picked these first four because I see an overall theme in them: DiD looks fairly straightforward in theory, but irl applications tend to make the assumptions do a lot of work, and these papers all show, in different ways, how design, outcome structure, and inference determine what your estimates are identifying in practice. So today’s post is based on:

Stacked Difference-in-Differences, by Coady Wing, Seth M. Freedman and Alex Hollingsworth

Event-Study Designs for Discrete Outcomes under Transition Independence, by Young Ahn and Hiroyuki Kasahara

Inference for Matched Difference-in-Differences, by Mijeong Kim and Mingue Park

Parallel Trends Forest: Data-Driven Control Sample Selection in Difference-in-Differences, by Yesol Huh and Matthew Vanderpool Kling

Stacked Difference-in-Differences

(This is for Prof Khoa :) Also, there is a lot of info in the footnotes because this topic is important - not that the others aren’t, but I feel like applied people may come across this sort of approach/design-based implementation, or want to apply it themselves, more often than some of the other methods we covered. As a reminder, stacked DiD is a regression-based approach used in staggered adoption settings, where different units receive treatment at different times1. It became popular as researchers moved away from standard two-way fixed effects (TWFE) in settings with heterogeneous treatment effects, since TWFE can compare already-treated units with later-treated units in ways that don’t recover a clear causal average2.)

TL;DR: stacked DiD became popular in staggered adoption settings because it avoids the late-versus-early treated comparisons that caused problems for standard TWFE. This paper shows that the usual unweighted stacked regression still doesn’t have a clear causal interpretation because treatment and control trends are combined with different implicit weights across sub-experiments. The authors define a target parameter - the trimmed aggregate ATT - and show that a weighted stacked estimator lines up with it. The result is a more defensible stacked DiD approach for applied work, with code provided for the weighting procedure.

What is this paper about?

This paper studies the performance of stacked DiD estimators3 in staggered adoption settings. The authors take a trimmed aggregate ATT4 as the target parameter and ask whether stacked regressions recover it. Their main point is that the most basic stacked estimator doesn’t identify that target because it places different implicit weights on treatment and control trends across sub-experiments. As a result, even if each individual sub-experiment satisfies the standard DiD assumptions, the pooled stacked regression5 can’t be given a clean causal interpretation as an ATT. The paper then derives corrective sample weights and proposes a weighted stacked estimator that does identify the trimmed aggregate ATT.

What do the authors do?

The paper moves in a fairly clear sequence. First, the authors define the parameter they want to study: the trimmed aggregate ATT, which is a weighted average of group-time treatment effects over a trimmed set of adoption cohorts. The trimming step is there to keep the composition of the event-study sample stable across the chosen pre- and post-treatment window so that movements in the estimates over event time are not driven by different cohorts entering and leaving the average.
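To make the trimming and stacking steps concrete, here is a minimal Python sketch. The function name `build_stack`, the row layout and the toy adoption dates are all made up for illustration. The inclusion rule (the full event window must sit inside the data, and controls must stay untreated until after the window ends) is my reading of the design, so check it against the authors' repo before relying on it.

```python
def build_stack(adoption, t_min, t_max, k_pre, k_post):
    """Build a stacked dataset from a staggered adoption design.

    adoption maps unit -> adoption period (None = never treated).
    Returns rows of (cohort, unit, period, event_time, treated).
    """
    rows = []
    cohorts = sorted({a for a in adoption.values() if a is not None})
    for a in cohorts:
        # Trimming: keep a cohort only if its whole event window is observed.
        if a - k_pre < t_min or a + k_post > t_max:
            continue
        treated = [u for u, d in adoption.items() if d == a]
        # Clean controls (assumed rule): untreated through the end of the window.
        clean = [u for u, d in adoption.items() if d is None or d > a + k_post]
        if not clean:
            continue
        for t in range(a - k_pre, a + k_post + 1):
            rows += [(a, u, t, t - a, 1) for u in treated]
            rows += [(a, u, t, t - a, 0) for u in clean]
    return rows


# Hypothetical toy design: three adoption cohorts and one never-treated unit.
adoption = {"A": 2003, "B": 2005, "C": 2009, "D": None}
stack = build_stack(adoption, t_min=2000, t_max=2010, k_pre=2, k_post=2)
kept_cohorts = sorted({row[0] for row in stack})
print(kept_cohorts)
print(len(stack))
```

In this toy example the 2009 cohort is trimmed because its window runs past the last period, and unit D appears as a control in both remaining sub-experiments. That reuse of the same unit is what makes the clustering choice matter for inference.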

They then turn to the standard unweighted stacked regression and ask whether it recovers that target parameter. Their answer is no :) The problem is not that the individual sub-experiments are invalid on their own. The problem is that, once they are pooled into one stacked regression, treatment and control trends are combined with different implicit weights across sub-experiments. Because of that the basic stacked estimator doesn’t identify the trimmed aggregate ATT, or any other average causal effect in general.

The next step is the main constructive part of the paper. The authors derive corrective sample weights and use them to build a weighted stacked DID estimator. With those weights in place, the stacked regression does recover the trimmed aggregate ATT. They show that this can be implemented through weighted least squares, either in a simple DID setup or in an event-study specification. They also note that the same logic can be adapted if the researcher wants a different aggregate such as a population-weighted version or a sample-share-weighted version.
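A small numerical sketch may help show why the weights matter. The numbers below are invented, and the weight used for controls (the sub-experiment's share of treated units divided by its share of control units, with treated units keeping a weight of one) is my understanding of the corrective weighting logic rather than a copy of the authors' code. Each sub-experiment is summarised by its unit counts and mean pre-to-post changes.

```python
# Hypothetical sub-experiments: unit counts and mean pre-to-post outcome changes.
sub_experiments = [
    {"n_treat": 10, "n_ctrl": 90, "d_treat": 3.0, "d_ctrl": 1.0},  # DiD = 2.0
    {"n_treat": 30, "n_ctrl": 20, "d_treat": 5.0, "d_ctrl": 4.0},  # DiD = 1.0
]


def stacked_did(subs, weighted):
    """Pooled treated trend minus pooled control trend across the stack."""
    n_treat_total = sum(s["n_treat"] for s in subs)
    n_ctrl_total = sum(s["n_ctrl"] for s in subs)
    treat_num = ctrl_num = ctrl_den = 0.0
    for s in subs:
        treat_share = s["n_treat"] / n_treat_total
        ctrl_share = s["n_ctrl"] / n_ctrl_total
        # Assumed corrective weight for controls; treated units keep weight 1.
        q = treat_share / ctrl_share if weighted else 1.0
        treat_num += s["n_treat"] * s["d_treat"]
        ctrl_num += q * s["n_ctrl"] * s["d_ctrl"]
        ctrl_den += q * s["n_ctrl"]
    return treat_num / n_treat_total - ctrl_num / ctrl_den


# Target: treated-share-weighted average of the sub-experiment DiDs.
n_treat_total = sum(s["n_treat"] for s in sub_experiments)
target = sum(
    s["n_treat"] / n_treat_total * (s["d_treat"] - s["d_ctrl"])
    for s in sub_experiments
)

unweighted = stacked_did(sub_experiments, weighted=False)
weighted = stacked_did(sub_experiments, weighted=True)
print(round(unweighted, 3), round(weighted, 3), round(target, 3))
```

With these numbers the unweighted estimate lands above both sub-experiment DiDs (2.0 and 1.0), so it is not a convex combination of them, while the weighted version matches the treated-share-weighted average.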

After that, the paper looks at stacked fixed-effects specifications that had already been used in applied work. This part is useful because many of you will have seen exactly those regressions in practice. The authors show that these fixed-effects versions run into the same basic problem: in general, the unweighted specification doesn’t identify the target aggregate either. Once the corrective weights are added, that problem is resolved and the fixed effects themselves become unnecessary for identification. One of the paper’s broader points is that a simpler weighted event-study specification is enough.

The final step is inference. Because stacked datasets can reuse the same groups across multiple sub-experiments, the paper discusses how standard errors should be handled and compares clustering approaches. The authors run a small Monte Carlo exercise and find that clustering at the group level and clustering at the group-by-sub-experiment level both perform reasonably well when the number of clusters is not too small. So the paper is not only about identification but also gives us some guidance on implementation, which is always welcome.

Why is this important?

This paper is important because stacked DiD was already being used in applied work yet the literature hadn’t clearly established what the standard stacked regression identified. That is a problem if researchers are treating the coefficient from a stacked regression as a causal average without being able to say what average it is (isn’t this always a problem?). One of the paper’s main contributions is to show that the usual unweighted stacked regression doesn’t, in general, identify the target aggregate, or any other convex combination of causal effects.

It’s also important because the paper deals directly with a common practical use of event studies. We often want to look at treatment effects over time and use the pre-period to check for possible violations of the DiD assumptions. The authors show why that can go wrong if the composition of the sample changes across event time. Their trimmed aggregate ATT is built to avoid that problem so that changes in the event-study line reflect treatment dynamics in the post-period or differential pre-trends in the pre-period, rather than different cohorts entering and leaving the average at different horizons.
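The composition problem is easy to see with made-up numbers. In the sketch below, two hypothetical cohorts each have a constant treatment effect (no dynamics at all) but are observed for different post-treatment horizons. For simplicity it takes a plain average across the cohorts observed at each event time, which is a simplification and not the paper's weighting.

```python
# Hypothetical cohorts with constant effects, observed for different horizons.
cohorts = {
    "early": {"effect": 1.0, "max_event_time": 4},
    "late": {"effect": 3.0, "max_event_time": 1},
}


def event_study_average(cohorts, horizons):
    """Simple average of cohort effects among cohorts observed at each event time."""
    path = {}
    for e in horizons:
        observed = [c["effect"] for c in cohorts.values() if c["max_event_time"] >= e]
        path[e] = sum(observed) / len(observed)
    return path


# Untrimmed: the late cohort drops out after event time 1.
untrimmed = event_study_average(cohorts, range(0, 5))

# Trimmed: only horizons at which every cohort is observed.
common_horizon = min(c["max_event_time"] for c in cohorts.values())
trimmed = event_study_average(cohorts, range(0, common_horizon + 1))

print(untrimmed)
print(trimmed)
```

The untrimmed path drops from 2.0 to 1.0 after event time 1 even though no cohort's effect changes, which would look like a fading treatment effect on an event-study plot. The trimmed path stays flat at 2.0.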

There is also a practical gain for applied researchers. The paper’s weighted stacked approach is still regression-based, which makes it relatively easy to implement and explain. The authors are quite explicit on this point. They argue that stacked estimation is attractive for applied work because it’s regression-based, keeps attention on the underlying research design and doesn’t rely on extra modelling assumptions beyond the standard DiD setup. They also show that the weighted estimator gives coefficients that correspond to a well-defined average of group-time ATT parameters, which gives us a clearer rationale for using the method.

Finally, this paper is useful because it speaks directly to what people were already doing. The authors discuss earlier applied papers using stacked DiD and show that the stacked fixed-effects versions used there run into the same general identification problem when left unweighted. They show that a simpler weighted event-study specification is sufficient to identify the trimmed aggregate ATT. By contrast, the more complicated fixed-effects setup does not solve the core issue on its own. For readers who may want to use stacked DiD in their own work, that is a very practical takeaway.

Who should care?

Applied researchers who want to use stacked DiD in staggered adoption settings, where different units are treated at different times and the aim is to compare each treated cohort with clean controls that remain untreated over the relevant event window. This can come up in labour, health, public, education and other applied fields where policies are rolled out in stages across places or organisations.

Do we have code?

Yes. The authors provide a GitHub repo with example code for calculating and implementing the weights used in their stacked DiD approach.

In summary, this paper gives stacked DiD a much clearer foundation. Wing, Freedman and Hollingsworth show that the standard unweighted stacked regression doesn’t have a clear causal interpretation in staggered adoption settings even when the underlying sub-experiments satisfy the usual DiD assumptions. They then define a target parameter, the trimmed aggregate ATT, and show that a weighted stacked estimator can recover it. For applied researchers, the takeaway is fairly straightforward: stacking the data is only part of the job. You also need to think really carefully about which cohorts enter the sample, which units count as clean controls and how the regression weights are constructed. That makes this a very useful paper for anyone who may want to use stacked DiD in practice, or who wants a clearer link between the regression they run and the parameter they claim to estimate.

Event-Study Designs for Discrete Outcomes under Transition Independence

(Young Ahn is a 5th-year Ph.D. Candidate at UPenn, but he will soon start as a postdoc at Brown. All the best!)

TL;DR: this paper asks how to do DiD or event-study analysis when the outcome is discrete, such as employment status, complaints, or patenting. The authors argue that standard PT can be a poor fit in these settings, so they propose transition independence and then add a latent-type Markov structure to handle unobserved heterogeneity and short panels. In their applications, this leads to estimates that differ markedly from conventional DiD.

What is this paper about?

This paper is trying to solve a basic identification problem in DiD with discrete outcomes6. Standard DiD relies on PT, but that logic can be a poor fit when outcomes are bounded and evolve through transitions across categories7. The authors’ point is that this isn’t a minor technical issue. With discrete outcomes, differences in baseline distributions can generate mean reversion even in the absence of treatment, binary outcomes can imply impossible counterfactual probabilities and for multi-category outcomes the notion of a single trend becomes hard to pin down. The paper proposes transition independence as an alternative identification strategy8, and then adds a latent-type Markov structure to deal with unobserved heterogeneity and short panels9. The broader aim is to give researchers a way to do event-study or DiD analysis with discrete outcomes without leaning on a PTA that may be “incoherent” in this setting.

What do the authors do?

They set up a potential-outcomes framework for discrete outcomes and show how the ATT can be identified under transition independence. The basic idea is to construct the treated group’s counterfactual using control-group transition probabilities rather than mean outcome trends. They then relate this to conventional DiD, show that transition independence is equivalent to a version of conditional PT based on the full pre-treatment outcome history, and derive the bias of DiD under their framework. To make the approach workable in practice, they introduce a latent-type Markov structure, establish identification of latent-type-specific treatment effects and the overall ATT, and then develop an estimator. Finally, they apply the method to the Dodd-Frank Act, Norway’s patent reform, and the ADA. In all three cases their results differ substantially from conventional DiD10.
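Here is a stripped-down sketch of the difference between the two counterfactuals for a binary outcome. It is not the authors' estimator: there are no latent types, only two periods, and the "history" is just last period's outcome. All numbers and function names are hypothetical.

```python
def pt_counterfactual(treated_pre, control_pre, control_post):
    """Parallel-trends counterfactual: treated baseline plus the control change in means."""
    return treated_pre + (control_post - control_pre)


def transition_counterfactual(treated_pre, p_stay, p_enter):
    """Counterfactual built from control transition probabilities.

    p_stay  = P(Y_post = 1 | Y_pre = 1) among controls
    p_enter = P(Y_post = 1 | Y_pre = 0) among controls
    """
    return treated_pre * p_stay + (1 - treated_pre) * p_enter


# Hypothetical control-group dynamics.
p_stay, p_enter = 0.5, 0.1
control_pre = 0.5
control_post = control_pre * p_stay + (1 - control_pre) * p_enter  # about 0.30

# The treated group starts from a much lower baseline rate.
treated_pre = 0.1

pt = pt_counterfactual(treated_pre, control_pre, control_post)
ti = transition_counterfactual(treated_pre, p_stay, p_enter)
print(round(pt, 3), round(ti, 3))
```

The parallel-trends counterfactual comes out negative, which is impossible for a probability, while the transition-based one is a convex combination of two probabilities and so stays between 0 and 1. The gap between the two also reflects mean reversion: the control mean falls partly because it starts high, and the treated group starts low.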

Why is this important?

This paper is important because discrete outcomes are everywhere in applied work, yet DiD is still often used as though the usual PT logic carries over without much trouble. The authors show that this can lead to badly misspecified counterfactuals and, in turn, misleading treatment effects. Their alternative gives researchers a way to build counterfactuals that respects the bounded, transition-based nature of discrete outcomes. The applications make the stakes clear: in one case DiD implies complaint rates below zero, in another it overstates a negative effect, and in the ADA application the transition-based framework also shows which channels are driving the employment effect. So the paper is useful both methodologically and empirically, because it presents a different identification strategy for a common class of outcomes and shows that the choice of strategy can change the substantive conclusion.

Who should care?

This paper should be useful for researchers working with outcomes like employment status, unemployment, labour-force participation, occupational choice, complaint filing, patenting, disability status, or other bounded categorical variables. It is directly relevant in settings where the outcome moves across states over time and researchers might still be tempted to use a standard DiD or event-study design on binarised outcomes.

Do we have code?

Yes. The authors provide a standalone R package with replication code, available as bayesiahn/ak.

In summary, Ahn and Kasahara argue that standard DiD can be a poor fit for discrete outcomes because the counterfactual is built from mean trends in settings where outcomes are bounded and evolve through transitions across states. Their solution is to replace PT with transition independence and then use a latent-type Markov structure to make the framework workable with unobserved heterogeneity and short panels. The paper gives applied researchers a different way to study treatment effects with discrete outcomes, and the empirical applications show that it can lead to very different conclusions from conventional DiD.

Inference for Matched Difference-in-Differences

TL;DR: this paper studies inference in matched DiD and shows that standard variance estimators can be too conservative once matching induces dependence within treated-control pairs. Kim and Park propose a projection-based variance estimator that removes the variation explained by the matching covariates and targets the correct design-based variance instead. In their simulations it performs much better than standard unit-level or pair-level procedures in informative matching designs - but watch out: when matching is uninformative (R²_Z ≈ 0), the projection step overcorrects and the estimator undershoots, so standard pair-cluster inference is the safer default in that setting.

What is this paper about?

This paper is about inference in matched DiD11. Kim and Park start from a simple point: matching is often used in DiD to improve covariate balance, but once we match treated units to controls, the design itself creates dependence within matched pairs12. Standard variance estimators usually ignore that feature, so they target the wrong variance. In this setting, the consequence is systematic overestimation of standard errors1314. The paper’s goal is to fix that. More specifically, the authors study the variance implied by the matched design and show why standard unit-level and cluster-level inference can be too conservative once matching is built into the estimator. They then propose a projection-based variance estimator that removes the component of variation explained by the matching covariates and is designed to recover the relevant design-based variance15. The broader aim is to show that, in matched DiD, getting the point estimate is only part of the job. You also need a standard error that reflects the dependence structure created by the matching step.

What do the authors do?

Kim and Park begin by setting up a matched DiD framework in which each treated unit is matched one-to-one, without replacement, to a control unit using pre-treatment or time-invariant covariates. They then write the matched DiD estimator in an asymptotic linear form and use that setup to show what variance target the matched design implies. The next step is to explain why standard inference goes wrong. The paper shows that matching creates positive covariance within matched pairs because treated units and their matched controls share a common component linked to the matching covariates. Once the estimator is differenced, that covariance reduces the true variance. Standard unit-level CRVE16 ignores this term, so it overstates uncertainty, and pair-level clustering only partly fixes the problem because it still doesn’t use the covariate structure that generated the dependence.

Their solution is a projection-based variance estimator. The basic idea is to take the within-pair sum of first-differenced residuals, project it onto the matching covariates and remove the variation explained by those covariates. This yields an adjusted variance estimator designed to recover the design-based variance implied by the matched sample. They also show that the estimator can be written as a scaled version of the pair-cluster variance estimator where the scaling factor depends on how much of the within-pair variation is explained by the matching covariates.
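The projection step can be illustrated with a toy calculation. This is only a sketch of the general logic (regress the within-pair residual sums on a matching covariate and keep what is left over), with one hypothetical covariate and invented numbers. The paper's exact estimator and scaling factor may differ from what is shown here.

```python
def project_out(pair_sums, z):
    """Regress pair-level residual sums on one covariate (with intercept).

    Returns (rss, tss, r2): leftover sum of squares, total centred sum of
    squares, and the share of variation explained by the covariate.
    """
    n = len(pair_sums)
    s_bar = sum(pair_sums) / n
    z_bar = sum(z) / n
    szz = sum((zi - z_bar) ** 2 for zi in z)
    szs = sum((zi - z_bar) * (si - s_bar) for zi, si in zip(z, pair_sums))
    slope = szs / szz
    resid = [(si - s_bar) - slope * (zi - z_bar) for zi, si in zip(z, pair_sums)]
    tss = sum((si - s_bar) ** 2 for si in pair_sums)
    rss = sum(r ** 2 for r in resid)
    return rss, tss, 1 - rss / tss


# Hypothetical within-pair residual sums and a matching covariate that explains most of them.
pair_sums = [2.1, 3.9, 6.2, 7.8]
z = [1.0, 2.0, 3.0, 4.0]

rss, tss, r2 = project_out(pair_sums, z)
scaling = rss / tss  # equals 1 - r2 by construction
print(round(r2, 4), round(scaling, 4))
```

When the matching covariate explains most of the within-pair variation, the leftover sum of squares is a small fraction of the total, which is the sense in which the adjusted variance is a scaled-down version of a pair-cluster style quantity.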

They then study finite-sample performance in Monte Carlo simulations. The results show that standard unit-level and pair-level variance estimators are too conservative in informative matching designs, while the projection-based estimator comes much closer to the Monte Carlo benchmark.

Why is this important?

This paper is important because matched DiD is widely used to improve comparability between treated and control units, yet the matching step also changes the dependence structure of the estimator. Kim and Park show that standard variance estimators can then become systematically too conservative because they miss the variance reduction created by matching-induced covariance within pairs. It is also important because the paper offers a very concrete correction. The projection-based estimator adjusts inference using the same matching covariates that created the dependence in the first place. The simulation results show that this performs much better than standard unit-level or pair-level procedures in informative matching designs.

Who should care?

This paper should be useful for researchers using matched DiD to improve covariate balance before estimation. It’s especially relevant in settings where one-to-one matching is used and inference is reported with standard cluster-robust standard errors without much discussion of what variance is being estimated17. More broadly, it should interest applied researchers who use matching and then run DiD as though the inference problem were unchanged by the design step.

Do we have code?

Not yet. Will update if this changes.

In summary, Kim and Park show that, in matched DiD, the matching step changes the variance structure of the estimator in a way that standard inference usually ignores. Because matching induces positive covariance within matched pairs, standard unit-level and pair-level variance estimators can become too conservative. Their solution is a projection-based variance estimator that adjusts for the dependence created by the matching covariates. The paper’s main message is simple: once matching is part of the design, the standard errors need to reflect that design too.

Parallel Trends Forest: Data-Driven Control Sample Selection in Difference-in-Differences

TL;DR: this paper proposes parallel trends forest, a machine-learning method for choosing control units in DiD when treatment assignment has little randomness and there are many plausible controls. Instead of fixing the control sample by hand, it uses pre-treatment data to build a weighted control for each treated unit that moves as closely in parallel as possible.

What is this paper about?

This paper is trying to solve a very practical problem in DiD: when treatment assignment has little randomness and there are many possible control units, how do we choose the control sample in a disciplined way? The authors’ answer is parallel trends forest, a data-driven method that uses pre-treatment data and machine learning to construct, for each treated unit, an optimal weighted control sample18 that moves as closely in parallel as possible before treatment19. The broader concern is familiar. In many DiD applications, we don’t have random treatment assignment, so we choose a control group that looks “reasonable”, inspect the pre-treatment series, and hope the PTA is credible. Huh and Kling are trying to improve on that workflow. Their method uses random forests20 to search over a large set of covariates and control units rather than relying on researcher judgment alone or on a standard TWFE regression with a fixed control sample21.

The paper is also aimed at a specific type of setting: relatively long panels with noisy, granular and sometimes non-normal data. The authors argue that existing methods such as SC, synthetic DiD and matrix completion can struggle there, either because they fit poorly, overfit in-sample22 or don’t adapt well to a large and chaotic donor pool23 (which is true). Parallel trends forest is meant to work better in exactly those cases. The main goal is to give us a more reliable way to build a control sample before running DiD, especially when the design is useful but far from clean.

What do the authors do?

They start by formalising the problem: for each treated unit, they want to find a weighted combination of control units that would have moved in parallel with it in the absence of treatment. That means the method constructs an optimal weighted control for each treated unit using pre-treatment data.

They then define a new measure of deviation from parallel trends24. Instead of relying on the usual sum of squared errors, they use a placebo-style criterion based on how large the estimated treatment effect would be if treatment were falsely assigned at different dates in the pre-treatment period. This is meant to work better with noisy, granular and non-normal data.
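As a rough illustration of a placebo-style criterion, the sketch below assigns a fake treatment date at every point in the pre-period, computes a simple DiD at each one, and averages the absolute values. The exact functional form in the paper may well differ. The function name `placebo_deviation` and the toy series are assumptions of mine.

```python
def mean(xs):
    return sum(xs) / len(xs)


def placebo_deviation(treated, control):
    """Average absolute placebo DiD over all fake treatment dates in the pre-period."""
    effects = []
    for d in range(1, len(treated)):  # fake treatment starts at index d
        t_change = mean(treated[d:]) - mean(treated[:d])
        c_change = mean(control[d:]) - mean(control[:d])
        effects.append(abs(t_change - c_change))
    return mean(effects)


# Hypothetical pre-treatment series.
treated = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
parallel_control = [y + 10.0 for y in treated]  # same trend, different level
flat_control = [3.0] * 6                        # no trend at all

dev_parallel = placebo_deviation(treated, parallel_control)
dev_flat = placebo_deviation(treated, flat_control)
print(dev_parallel, dev_flat)
```

A control that moves in parallel scores (essentially) zero regardless of its level, while a control with a different trend produces non-zero placebo effects at every fake date.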

The next step is the machine-learning part. They build trees using this deviation measure, split units only along the unit dimension and then combine many trees into a parallel trends forest. From that forest, they recover weights for each treated-control pair based on how often they end up in the same leaf. Those weights are then used to construct the counterfactual for each treated unit and, from there, the ATT.
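The weighting step can be sketched as follows, taking the fitted trees as given. Each tree is represented simply as a mapping from unit to leaf id. The per-leaf normalisation (splitting one unit of weight equally among the controls sharing the treated unit's leaf, then averaging over trees) is a common forest-weighting convention that I am assuming here, not something confirmed from the paper.

```python
def forest_weights(trees, treated_unit, controls):
    """Weights on controls based on how often they share a leaf with the treated unit.

    trees is a list of dicts mapping unit -> leaf id (one dict per tree).
    """
    weights = {c: 0.0 for c in controls}
    used = 0
    for leaf_of in trees:
        same_leaf = [c for c in controls if leaf_of[c] == leaf_of[treated_unit]]
        if not same_leaf:
            continue  # this tree offers no control for the treated unit
        used += 1
        for c in same_leaf:
            weights[c] += 1.0 / len(same_leaf)
    if used == 0:
        raise ValueError("treated unit never shares a leaf with any control")
    return {c: w / used for c, w in weights.items()}


# Hypothetical leaf assignments from two trees.
trees = [
    {"T": 0, "c1": 0, "c2": 1, "c3": 0},
    {"T": 1, "c1": 1, "c2": 1, "c3": 0},
]
weights = forest_weights(trees, "T", ["c1", "c2", "c3"])
print(weights)
```

Control c1 shares a leaf with the treated unit in both trees and so gets the largest weight. The counterfactual for the treated unit would then be the weighted average of the controls' outcomes using these weights.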

After laying out the method, they compare it to SC, synthetic DiD and matrix completion using a placebo exercise and Monte Carlo simulations. In their setting, they find that the parallel trends forest tracks the treated sample more closely and performs better than the existing methods. They then apply the method to the rollout of post-trade transparency in the corporate bond market. They use it to study the effect of TRACE Phase 2 on bond turnover, compare the results to TWFE estimates using several different control samples and then also show how the method can be combined with the Rambachan-Roth framework to allow for bounded deviations from parallel trends25. They also introduce an “honest”26 version of the forest to reduce overfitting.

Why is this important?

A few reasons. This paper is important because control-sample choice is often one of the weakest parts of applied DiD. In many settings we don’t have much randomness in treatment assignment so the credibility of the design depends heavily on which controls are chosen. Huh and Kling turn that choice into an explicit data-driven step rather than leaving it to researcher judgment alone. It is also important because the paper is aimed at a setting where many existing methods struggle: long panels with many candidate controls, noisy outcomes and granular data. The empirical application makes the point clearly. In their TRACE setting, different “reasonable” control samples produce quite different TWFE estimates, sometimes even with different signs. Their method gives a smaller estimate of the effect of transparency on turnover and once they allow for bounded deviations from parallel trends, the effect is not statistically significant.

Who should care?

Anyone working in settings where there are many plausible controls, little randomness in treatment assignment and enough pre-treatment data to compare how units move over time. That makes it relevant for work on phased policy rollouts, regulatory changes, market-design reforms and other settings where the main practical difficulty is choosing a credible control sample rather than writing down the DiD regression itself.

Do we have code?

Not yet. Will update if anything changes. The method is described well enough to attempt a reconstruction, but not enough to call it fully reproducible out of the box.

In summary, Huh and Kling take one of the messiest parts of applied DiD - choosing the control sample - and turn it into an explicit data-driven step. Their method is built for settings with long panels, large donor pools and noisy data, where standard tools can fit badly or become very sensitive to which controls the researcher picked. The broader contribution is practical: rather than asking readers to accept a “reasonable” control group on faith, the paper offers a structured way to search for one using the pre-treatment data itself.

In stacked DiD, we construct a separate sub-experiment for each treatment cohort, using that cohort as the treated group and a set of clean controls, and then pool those sub-experiments into one stacked dataset for estimation.

The appeal of this approach is that it avoids the late-versus-early treated comparisons that created problems for conventional TWFE in staggered adoption designs under treatment effect heterogeneity. In the paper the authors note that versions of stacked DiD were already being used in applied work before this formal treatment.

Plural because there are 3: the basic unweighted stacked estimator, the weighted stacked estimator they propose, and the stacked fixed-effects specification used in earlier applied work, which they also evaluate.

The trimmed aggregate ATT is a weighted average of cohort-specific treatment effects at each event time in a staggered adoption design, computed after trimming the sample so that the same cohorts contribute across the chosen event window. This keeps the event-study graph from being driven by cohorts entering and leaving the average at different horizons.

A pooled stacked regression is just the regression you run after you have built all the sub-experiments and stacked them into one dataset, so instead of estimating a separate DiD for each treatment cohort, you create a sub-experiment for each valid adoption cohort, then append those sub-experiments into one stacked file, and finally run one regression on the pooled stacked dataset.

By “discrete outcomes” the authors mean outcomes that take a limited set of categories, such as employed, unemployed or out of the labour force, or which occupation a worker is in. These are settings where we often still use DiD, usually by turning categories into binary indicators.

The paper argues that PT can fail in discrete settings for three reasons: mean reversion can create different trends when groups start from different baseline distributions, binary outcomes can imply impossible counterfactual probabilities (P < 0 or P > 1), and for multi-category outcomes the notion of a single “trend” isn’t well defined.

Transition independence means that, absent treatment, treated and control units with the same pre-treatment outcome path would have the same transition dynamics. So the counterfactual is built from transition probabilities rather than from average outcome trends.

To allow for unobserved heterogeneity, the authors assume that units belong to latent types with different transition dynamics, and that outcomes follow type-specific Markov processes. This is what lets them recover both latent-type-specific and aggregate treatment effects from short panels.

Funnily enough, it’s all due to the identification strategy :) The two methods are building different counterfactuals, and in these applications the usual DiD counterfactual is a poor fit for the DGP. The paper’s general argument is that with discrete outcomes, conventional DiD can go wrong for 3 main reasons: baseline differences create mean reversion, binary outcomes can generate impossible probabilities, and multi-category outcomes are driven by transitions across states rather than a single smooth trend. In the 3 applications, each one highlights a different version of that problem. In the Dodd-Frank case, the DiD counterfactual complaint rate falls below zero, so the estimates differ because DiD is extrapolating linearly in a setting where probabilities are bounded. Their transition-based method avoids that by construction since it works with transition probabilities rather than mean trends. In the Norway patent reform case, the treated group starts with a much higher patenting rate than the control group, so there is more room for patenting to fall “mechanically”. DiD reads that decline as treatment, while the authors argue that much of it is mean reversion. Once they model the transition dynamics directly, the estimated effect moves much closer to zero. In the ADA case, the issue is that level comparisons hide what is happening in the underlying transitions. The paper says DiD misses statistically significant employment effects because pre-treatment level differences mask the transition dynamics, whereas their method can trace flows across labour-force states and shows that the negative employment effect is operating through specific exit channels (mainly transitions from employment into out-of-labour-force status). The estimates are so different because the paper replaces the identification strategy. DiD uses PT in outcome levels. 
Their method uses transition independence and constructs counterfactuals from transition probabilities conditional on pre-treatment histories. When the DiD counterfactual is badly misspecified, the resulting treatment effects can move a lot, and sometimes even flip sign.

Matched DiD combines matching with a DiD setup: treated units are first matched to similar control units using observed covariates and the DiD estimator is then computed on the matched sample. The attraction is better covariate balance and - in principle! - a more credible comparison group.

The key point is that matched treated and control units are deliberately chosen to be similar on the matching covariates, which then creates positive correlation within matched pairs since both units share a common component related to those covariates.

Here “conservative” means the standard errors are too large. The paper’s argument is that standard variance estimators miss the variance reduction coming from the positive covariance within matched pairs, so they systematically overstate uncertainty.

Abadie and Imbens (2008) identified the same mechanism in paired experiments and proposed a resampling-based correction. Kim and Park’s contribution is a closed-form fix that targets the same problem specifically in matched DiD designs.

The proposed estimator works by projecting the within-pair error sum onto the matching covariates and removing the part explained by them. Intuitively, this filters out the variation induced by the matching design and leaves the idiosyncratic component relevant for variance estimation.

Standard unit-level CRVE means the usual cluster-robust variance estimator applied at the unit level, treating each unit as independent from the others and allowing correlation only within the unit over time. In this paper the problem is that this estimator ignores the extra dependence created by the matching step, so it misses the covariance within matched pairs and ends up overstating uncertainty.

The framing here draws on Prof. Wooldridge’s (2023) “What is a standard error?”, which argues that the validity of any standard error depends on correctly specifying the variance target relative to the underlying source of randomness. Worth reading alongside this paper.

Instead of picking one control group for the whole treatment sample, the method builds a weighted control for each treated unit. The weights are based on which control units end up in the same leaves as the treated unit across many trees in the forest.

Parallel trends forest is a machine-learning method that builds an optimal control sample for each treated unit using only pre-treatment data. It assigns weights to control units so that the weighted control outcome moves as closely in parallel as possible with the treated unit before treatment.

Random forests let the method work with many covariates and many potential control units. They also automate covariate selection so the algorithm can use the variables that really help predict which units move in parallel instead of relying only on what the researcher guessed would matter.

The paper is motivated by settings with little or no randomness in treatment assignment. In those cases, we often choose controls by hand, compare pre-treatment trends visually, and then run DiD. Huh and Kling are trying to replace that partly ad hoc step with a data-driven selection rule.

Synthetic control actually does the opposite of fitting poorly: it fits the pre-treatment data very closely in-sample (it overfits) but then performs badly out-of-sample. Synthetic DiD and matrix completion fail for a different reason: they assign near-equal weights across the whole control pool rather than upweighting the units that actually track the treated series.

In the paper the authors include earlier-treated units - bonds that became transparent before the sample window - in the donor pool. They argue these would organically receive near-zero weight if their trends have already diverged from the newly-treated bonds by the start of the sample period, which serves as a data-driven answer to the Goodman-Bacon contamination concern.

The paper doesn’t use the usual sum of squared pre-treatment errors as its objective. Instead, it defines a placebo-style measure based on how large the estimated treatment effect would be if treatment were assigned at different dates in the pre-treatment period.

The paper doesn’t derive analytical standard errors for the parallel trends forest estimator, and this is explicitly flagged as outside scope. The convergence analysis (Figure 8 in the paper) shows the weight estimates stabilise quickly across trees, but that addresses estimation variance, not inferential uncertainty more broadly. Do keep this in mind before reaching for the method.

The “honest” version of the forest follows the logic in Wager and Athey: one sample is used to grow the tree and another is used to estimate the weights. The aim is to reduce overfitting to pre-treatment noise.